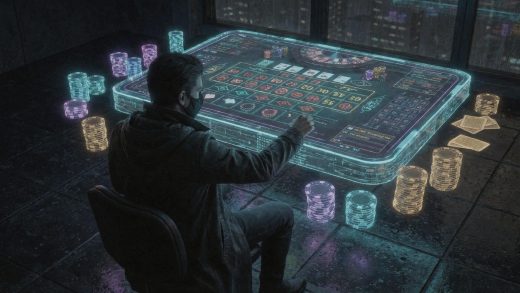

Neural Engines and Pixels: How AI Optimizes Casino Game Graphics in Real-Time

As a lead technologist for a premier global gaming collective in 2026, I have seen the traditional art of game design completely rewritten by the advent of neural rendering. We are no longer in the era of static, pre-rendered backgrounds or rigid animation loops. Today, the visual experience adapts to the user’s hardware, connection speed, and even their playing style within milliseconds. Real-time slots in 2026 are powered by AI engines that reconstruct every frame, so that visual fidelity holds up whether you are on a flagship workstation or a budget mobile device. My daily focus is the intersection of high-end aesthetics and technical performance: making our games look cinematic while keeping input response as close to instantaneous as the hardware and network allow.

The Shift from Static Assets to Generative Rendering

Only three years ago, game developers had to create thousands of individual texture files for different screen resolutions. This was a cumbersome, “brute-force” approach to design. In 2026, we have moved to a generative model. Instead of storing 8K textures on a server and trying to stream them over a mobile network, we send low-resolution “base instructions” and let the AI on the player’s device or the nearest edge server reconstruct the details in real-time.

This process, often called neural upscaling, has evolved far beyond the early versions of DLSS or FSR. Our current AI models can take a 720p source and upscale it to 8K, a 36-fold increase in pixel count, while synthesizing plausible detail that was never present in the source art. The model can add the microscopic scratches on a gold coin or the complex light refractions in a crystal symbol, all while the reels are spinning. This allows us to offer incredible visual depth without requiring the user to download massive multi-gigabyte updates.
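To put the 720p-to-8K jump in perspective, the arithmetic can be sketched in a few lines of Python. The resolutions are the standard 1280×720 and 7680×4320 frame sizes; the helper names are illustrative and not part of any engine.

```python
# Standard frame sizes for the two resolutions discussed above.
SOURCE = (1280, 720)    # 720p
TARGET = (7680, 4320)   # 8K UHD


def pixel_count(resolution):
    """Total number of pixels in a (width, height) frame."""
    width, height = resolution
    return width * height


def upscale_factor(source, target):
    """How many output pixels must be produced per source pixel."""
    return pixel_count(target) / pixel_count(source)


print(pixel_count(SOURCE))             # 921600
print(pixel_count(TARGET))             # 33177600
print(upscale_factor(SOURCE, TARGET))  # 36.0
```

For every pixel that is streamed, the upscaler has to produce 36. Compressed video does not shrink in exact proportion to pixel count, so this is a rough guide to the bandwidth saving rather than a measurement.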

Real-Time Ray Tracing and Dynamic Lighting

Lighting is the soul of immersion. In a physical environment, light is chaotic and beautiful. Replicating that in a digital game used to require immense computing power that would melt a smartphone battery. AI has changed that. We now use “Neural Radiance Caching.”

How Neural Lighting Operates

Instead of calculating every individual ray of light, which is computationally expensive, our AI predicts how light should behave. It learns the geometry of the game environment and “fills in” the shadows and reflections. When a neon sign flashes in one of our futuristic-themed games, the AI calculates how that light hits the player’s virtual table, the glass of the slot machine, and the surrounding floor. This happens dynamically. If the game’s theme changes from day to night, the AI doesn’t just swap a skybox; it re-lights the entire scene in real-time, creating a seamless and atmospheric transition that feels grounded in reality.
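The core idea of reusing lighting results instead of recomputing them can be sketched with a toy cache. This is not a neural network: a plain lookup table keyed by quantized position stands in for the learned model, and a single inverse-square point light stands in for real ray tracing. The class name, parameters, and cell size are all assumptions made for illustration.

```python
import math


class RadianceCache:
    """Toy lookup-table cache; a real neural radiance cache uses a small trained network."""

    def __init__(self, light_pos, intensity, cell_size=0.5):
        self.light_pos = light_pos
        self.intensity = intensity
        self.cell_size = cell_size
        self.cells = {}            # quantized position -> cached radiance
        self.expensive_calls = 0   # how often we fell back to the full computation

    def _key(self, point):
        # Nearby points fall into the same cell and share one cached value.
        return tuple(math.floor(c / self.cell_size) for c in point)

    def _compute(self, point):
        # Stand-in for costly ray tracing: inverse-square falloff from one point light.
        self.expensive_calls += 1
        dist_sq = sum((p - l) ** 2 for p, l in zip(point, self.light_pos))
        return self.intensity / max(dist_sq, 1e-6)

    def radiance(self, point):
        key = self._key(point)
        if key not in self.cells:
            self.cells[key] = self._compute(point)
        return self.cells[key]

    def set_light(self, light_pos, intensity):
        # A lighting change (day to night, a neon sign flashing) invalidates the cache.
        self.light_pos, self.intensity = light_pos, intensity
        self.cells.clear()


cache = RadianceCache(light_pos=(0.0, 0.0, 2.0), intensity=100.0)
cache.radiance((0.1, 0.1, 0.0))   # computed
cache.radiance((0.2, 0.3, 0.0))   # same cell, served from the cache
cache.radiance((1.2, 0.1, 0.0))   # new cell, computed
print(cache.expensive_calls)      # 2
```

A learned cache would generalize smoothly between positions and update continuously as the scene changes, rather than clearing a table, but the payoff is the same: most lighting queries are answered without tracing new rays.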

Comparison: Traditional vs. AI-Optimized Graphics

| Technical Metric | Traditional Rendering (Legacy) | AI-Optimized Rendering (2026) |
| --- | --- | --- |
| Texture Handling | Pre-stored, high-bandwidth assets | Generative, on-the-fly reconstruction |
| Lighting Model | Static lightmaps (baked shadows) | Dynamic neural ray tracing |
| Frame Consistency | High risk of “stutter” during peaks | AI frame-interpolation (perfect 120fps) |
| Data Consumption | High (Streaming large 4K files) | Ultra-low (Streaming low-res neural seeds) |
| Device Heat | Significant (High GPU load) | Minimal (Optimized neural core usage) |

Personalization of the Visual Interface

One of the most advanced applications of AI in our 2026 platforms is the personalization of graphics. We have moved past “one size fits all” UI design. Our AI analyzes how a player interacts with the screen. If a player’s attention, inferred from signals such as where they tap, keeps returning to a specific corner of the interface, the AI can increase the level of detail and sharpness in that area. This borrows from foveated rendering, a technique best known from VR headsets with eye tracking, and applies it outside of VR.
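A minimal sketch of how a detail level could fall off with distance from the focus point is shown below. It assumes normalized screen coordinates in the range 0 to 1; the function name, the radius and falloff values, and the 0.25 minimum detail scale are hypothetical choices, not figures from our engine.

```python
import math


def detail_level(tile_center, focus, full_radius=0.15, falloff=0.35, min_detail=0.25):
    """Return a detail scale between min_detail and 1.0 for one screen tile.

    Tiles within full_radius of the focus point get full detail. Beyond that,
    detail drops linearly over the falloff distance, then stays at min_detail.
    """
    distance = math.dist(tile_center, focus)
    if distance <= full_radius:
        return 1.0
    t = min((distance - full_radius) / falloff, 1.0)
    return 1.0 - (1.0 - min_detail) * t


# Focus inferred near the top-right of the screen (hypothetical value).
focus = (0.8, 0.2)
for tile in [(0.8, 0.2), (0.5, 0.5), (0.1, 0.9)]:
    print(tile, round(detail_level(tile, focus), 2))
```

A linear falloff is the simplest choice; a production renderer would more likely use a smooth curve and blend between levels so the boundary is not visible.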

Furthermore, the “aesthetic style” of the game can adapt. If our algorithms detect that a player prefers a more minimalist, clean look, the AI can simplify the background clutter and sharpen the primary symbols in real-time. Conversely, for a player who enjoys high-octane, maximalist visuals, the AI can inject extra particle effects, bloom, and motion blur. We are essentially giving every player their own personalized art director who works in real-time to make the game look exactly how they want it.

Overcoming the Latency-Fidelity Tradeoff

The ultimate goal in 2026 is “Zero-Lag High-Fidelity.” In the past, you could have a beautiful game that was slow, or a fast game that was ugly. AI-driven frame generation has finally killed this tradeoff.

AI Frame Interpolation

When the network fluctuates, traditional games stutter. Our AI prevents this by generating “synthetic frames”: interpolated frames that sit between two real ones and, when a real frame arrives late, predicted frames that stand in for it. If a packet is lost or the connection dips for a few tens of milliseconds, the AI predicts what the next frame should look like based on the velocity of the moving objects on the screen. To the player, the motion remains fluid. This is particularly crucial in high-stakes environments where visual clarity and timing are everything. The AI acts as a visual buffer, so that brief technical hiccups are not perceived by the human eye.
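The velocity-based prediction described above can be illustrated with simple linear motion. This is a deliberately simplified sketch: it moves a single object position rather than synthesizing full images, and the `Sprite` type and function names are assumptions for the example.

```python
from dataclasses import dataclass


@dataclass
class Sprite:
    x: float
    y: float


def estimate_velocity(prev, curr, dt):
    """Velocity in pixels per second from two consecutive real frames."""
    return ((curr.x - prev.x) / dt, (curr.y - prev.y) / dt)


def extrapolate(curr, velocity, dt):
    """Predict the position one step ahead when the next real frame is late."""
    vx, vy = velocity
    return Sprite(curr.x + vx * dt, curr.y + vy * dt)


def interpolate(a, b, t):
    """Blend between two real frames; t=0 gives a, t=1 gives b."""
    return Sprite(a.x + (b.x - a.x) * t, a.y + (b.y - a.y) * t)


FRAME_TIME = 1 / 60  # seconds between real frames (assumed 60 fps source)

prev_frame = Sprite(100.0, 0.0)
curr_frame = Sprite(100.0, 12.0)   # a reel symbol moving down 12 px per frame

velocity = estimate_velocity(prev_frame, curr_frame, FRAME_TIME)
print(interpolate(prev_frame, curr_frame, 0.5))        # in-between frame
print(extrapolate(curr_frame, velocity, FRAME_TIME))   # stand-in for a late frame
```

Interpolation needs both neighboring frames, so it smooths motion but cannot cover a missing frame. Extrapolation covers the gap but can overshoot when an object changes direction, for example when a reel stops.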

The Role of Edge Computing in Visual Optimization

To make this happen globally, we don’t rely on a single data center. We utilize a distributed network of “Visual Edge Nodes.” These are small, high-powered AI servers located in major cities around the world.

When a player starts a session, the heavy lifting of the neural rendering is done at the edge node closest to them. The “last mile” of the connection only has to carry the final, optimized video stream. This allows us to deliver 2026-standard graphics to users who might be on older hardware or in areas with less-than-ideal internet infrastructure. We have effectively “democratized” high-end graphics, making the most beautiful gaming experiences accessible to everyone, regardless of their device’s price tag.
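As a rough illustration of picking the closest edge node, the sketch below selects a node by great-circle distance. The node names and coordinates are hypothetical, and geographic distance is used only as a simple proxy; a real deployment would more plausibly route on measured latency.

```python
import math

# Hypothetical edge nodes: name -> (latitude, longitude) in degrees.
EDGE_NODES = {
    "frankfurt": (50.11, 8.68),
    "singapore": (1.35, 103.82),
    "sao_paulo": (-23.55, -46.63),
}

EARTH_RADIUS_KM = 6371.0


def haversine_km(a, b):
    """Great-circle distance in kilometers between two (lat, lon) points."""
    lat1, lon1 = map(math.radians, a)
    lat2, lon2 = map(math.radians, b)
    dlat, dlon = lat2 - lat1, lon2 - lon1
    h = math.sin(dlat / 2) ** 2 + math.cos(lat1) * math.cos(lat2) * math.sin(dlon / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(h))


def nearest_node(player_location, nodes=EDGE_NODES):
    """Return the name of the node with the smallest distance to the player."""
    return min(nodes, key=lambda name: haversine_km(player_location, nodes[name]))


print(nearest_node((48.85, 2.35)))     # player in Paris -> frankfurt
print(nearest_node((13.75, 100.50)))   # player in Bangkok -> singapore
```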

Frequently Asked Questions

What is neural upscaling in 2026 casino games?

Neural upscaling is a technology where an AI takes a low-resolution image and fills in the missing pixels to create a high-definition or even 8K image. In 2026, this happens in real-time, allowing games to look incredibly sharp without requiring high bandwidth or heavy processing power from the player’s device.

How does AI reduce battery drain while improving graphics?

Instead of forcing the main GPU to work at 100% to render every pixel, the AI uses specialized “Neural Processing Units” (NPUs) that are much more energy-efficient for this kind of workload. These units handle the complex task of “guessing” the pixels and lighting, which uses significantly less power than rendering every pixel conventionally on the GPU.

Can AI-optimized graphics work on older smartphones?

Yes. Because most of the heavy optimization can be handled on the server side (Edge Computing) and then “streamed” to the device, even an older smartphone can display high-fidelity 2026 graphics. The AI handles the “translation” of the high-end visuals to a format the older hardware can display smoothly.

What is “Dynamic Asset Generation”?

This is when the AI creates textures and small details on the fly rather than loading them from a file. For example, the way light reflects off a virtual spinning wheel might be generated in real-time by the AI, ensuring that no two reflections are ever exactly the same, just like in the real world.

Does real-time AI optimization cause any input lag?

No. In 2026, the AI processing happens in the sub-millisecond range. In fact, AI often reduces perceived lag by using frame interpolation to smooth out any network stutters, making the game feel more responsive than a traditional non-AI game would.

How does the AI know my visual preferences?

The AI monitors subtle cues, such as where you tap, how long you stay on certain screens, and your hardware settings. Over time, it learns whether you prefer high-contrast visuals, simplified interfaces, or complex cinematic effects, and it adjusts the rendering engine to match those patterns.

Is real-time ray tracing possible on mobile in 2026?

Yes, through “Neural Radiance Caching.” Instead of traditional ray tracing that calculates every light path, the AI predicts the lighting based on pre-learned models. This allows for realistic reflections and shadows on mobile devices without the massive performance hit of legacy technology.

What is “Foveated Rendering” in the context of casino apps?

It is a technique where the AI identifies the most important part of the screen, usually where the action is happening or where the player is focusing, and renders that area in extreme detail. The peripheral areas are rendered at a lower resolution to save resources, though the AI makes this transition invisible to the human eye.

Does AI help with slow internet connections?

Significantly. AI can “reconstruct” a high-quality visual experience from a very thin data stream. If your connection drops in quality, the AI works harder on your local device to generate the missing visual details, ensuring the game doesn’t become “pixelated” or blurry.

What is the future of AI graphics in the next few years?

We are moving toward “Fully Generative Worlds,” where the entire visual environment is created in real-time based on the game’s narrative. Imagine a slot game where the background city or forest actually grows and changes the longer you play, with the AI designing every leaf and building as you go.

Conclusion

The transformation of casino graphics through real-time AI optimization has moved us into an era of unprecedented visual luxury. We have broken the chains of static design, allowing for an environment that is as dynamic and unpredictable as the games themselves. In 2026, the visual interface is no longer a barrier between the player and the game; it is an intelligent bridge that adapts, optimizes, and personalizes the experience in ways that were technically impossible just a few years ago. By leveraging neural upscaling, predictive lighting, and edge-based rendering, we have ensured that the “House” is not just a place to play, but a breathtaking visual masterpiece that is accessible to every person on the planet.

As we look toward the future, the boundary between the digital and the physical will only continue to blur. Our commitment is to remain at the absolute edge of this technological frontier, utilizing every advancement in artificial intelligence to push the limits of what a screen can display. We are no longer just rendering pixels; we are orchestrating light, shadow, and emotion in real-time. In this new world, the only limit to the visual experience is the imagination of our neural engines, and as those engines grow more powerful, the beauty and immersion of our gaming ecosystem will reach heights we are only beginning to explore.